Prototype of a Distributed Onboard Computing System

Introduction

1. Analysis of Requirements to Onboard Control Complex

2. Block Structure and Content of Base Small SpaceCraft (SSC) Complex

3. Contents and Functions of Systems

3.1. Power Supply System

3.2. SSC Control Unit

3.3. Scientific Equipment Complex

3.4. Thermal Control System

3.5. Communication System

3.6. Attitude Determination and Control System

3.7. Pyrotechnics and Separation Contacts System

4. Definition of Architecture and Block Structure of Onboard Computing System

4.1. Electronic Boards

4.2. Computing Module

4.3. Layout of Computing Module

4.4. Storage Devices Module

4.5. Interface Module

4.6. Power Supply Module

5. Operation Principles and Data Flows

5.1. Optical Module of Hyper-Spectral System

5.2. Computing Module

6. Trunk Interface

6.1. Contents of Interface Technical Facilities

6.2. Information Exchange and Data Transfer Control Pattern

6.3. Transceiving of Data

6.4. Interlevel Exchange

6.5. 8B/10B Coding

6.6. Receiving Side Decoding

7. Data Link (DL) Features

7.1. Rocket I/O Properties

7.2. Architecture Survey

7.2.1. Inner Frequency Synthesizer

7.2.2. Transmitter

7.2.3. Output FIFO Buffer

7.2.4. Serializer

8. Redundancy Interface

Conclusions

References

Introduction

Under project No. 2323, «Development of a prototype of a distributed fault-tolerant onboard computing system for the satellite control system and the complex of scientific equipment», of the International Science and Technology Center (ISTC), the Keldysh Institute of Applied Mathematics of RAS and the Space Research Institute of RAS, together with the Fraunhofer Institut Rechnerarchitektur und Softwaretechnik (FIRST, Berlin, Germany), are developing the software and hardware of this prototype. This preprint describes the project hardware developed to date.

The project envisages development of a fail-safe

distributed onboard computer system prototype to control a spacecraft and the

scientific equipment complex carrying out the hyper-spectral remote sensing of

the Earth.

The onboard control complex and the scientific equipment control system demand high reliability and robustness against various adverse factors. Meeting these requirements calls for a variety of approaches to the hardware implementation.

The preprint describes several units of the Onboard Computing System (BCS) prototype that are tolerant to certain single failures.

The BCS should be a distributed multi-computer system that performs all control, telemetry and monitoring functions as well as all application functions typical of the scientific equipment and the computer-controlled complex. Combining the different onboard computing functions into a single system with a high degree of redundancy allows both tight interaction between the various processes and flexible use of the reserve computing resources for different tasks, depending on the operational requirements and the required level of fault tolerance.

The BCS architecture is that of a homogeneous symmetrical multi-computer system, i.e. it comprises several (from 3 to 16) similar node computers interconnected by redundant data links. The computer modules are identical in hardware and differ only in the functions they perform. For the sake of redundancy, each function can be performed by at least two modules.

1. Analysis of Requirements to Onboard Control Complex

The onboard complex under development is intended to control a Small SpaceCraft (SSC) conducting scientific and technological experiments in near-Earth orbits, hyper-spectral Earth monitoring in particular. The necessary structure and composition of the base SSC have been analyzed to derive preliminary specifications for the onboard control complex and its modules/units.

2. Block Structure and Content of the Base SSC Complex

The complex provides control and survivability of the SSC during its boost to orbit and throughout the lifetime of the regular SSC systems and the Scientific Equipment (SE). Together with the SSC sensors and actuators, the complex must accomplish the following tasks:

● power supply system control

● spatial orientation monitoring

● spatial orientation regulation

● thermal control

● execution of the systems' initialization sequence and resolution of failure cases

● maintenance of the SSC operation profile during regular orbital flight

● maintaining the ability to command the SSC via a radio link with ground-based complexes

● accumulation and sending of service data via telemetry channels

● accumulation and sending of scientific data via telemetry channels

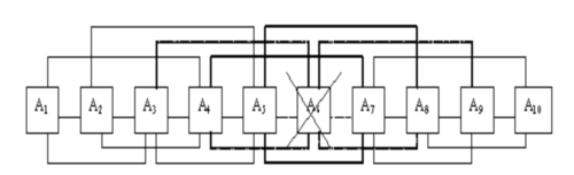

Figure 1 shows the base composition of the complex. The abbreviations used are expanded below in the descriptions of the individual units.

Fig.1. SSC base equipment complex

3. Contents and Functions of Systems

3.1. Power Supply System

The Power Supply System (PSS) distributes and provides supply voltages to the SSC consumers, including the SE. The PSS controls the operating and charging conditions of the accumulator batteries (AB) and switches currents between the solar panels and the ABs depending on whether the SSC is in the illuminated or the shadowed sector of the orbit. All functionally complete SSC devices should have their own autonomous secondary power supply sources (SPSS) with galvanic isolation from the primary power bus. A special PSS circuit provides protection in case the supply circuit of an individual consumer is short-circuited (SC). The PSS should also monitor the system's operational integrity and switch over to redundant units (if available).

3.2. SSC Control Unit

If redundant SSC control units (CU) are used, a CU switchover relay should be provided for emergency failure cases.

The SSC CU should provide:

● the operation and interaction algorithm for the regular in-orbit lifetime of the SSC

● access control logic for the program and data stores

● majority voting over the program and data stores

● onboard timer-calendar scheduling

● a data exchange interface with sensors and actuators

● accumulation of telemetry data, service and scientific information

Programs and tasks are executed under the control of a real-time operating system. The operating system monitors execution of the tasks and resolves conflicts arising from faults.

List of the executed tasks:

● initialization and test of the SSC systems

● execution of the assigned orbital flight profile

● operability monitoring of the SSC systems

● operation control of the scientific equipment

● reception of control commands from ground-based control stations

● collection and transmission of telemetry information

● SSC orientation control

● SSC thermal control

The data link protocol for SSC information exchange should include fault recovery algorithms.

The CU should provide information exchange over the data link, process the information received from the Earth, and compile SSC control profiles. To ensure the integrity of data exchanged over the actual communication channel, the CU must also prepare and encode the data.

3.3. Scientific Equipment Complex

The Scientific Equipment Complex (SEC) should correspond to the tasks and purposes of the scientific experiment. The SEC should be implemented according to requirements agreed with the SSC engineers at the draft project stage.

3.4. Thermal Control System

The Thermal Control System comprises thermal sensors and heating elements placed on the SSC body according to the thermal calculation. The system controls the thermal conditions of SSC operation.

3.5. Communication System

The system provides an uplink for control telecommands and a downlink for the service and scientific telemetry data of the SSC.

3.6. Attitude Determination and Control System

The Attitude Determination and Control System consists of:

● two triaxial angular velocity sensors (AVS)

● two solar sensors (SS) to determine the coordinates of the Sun's position

● attitude control thrusters.

The system is intended to maintain the axial stability and sunward orientation of the SSC according to the measurement data received from the SS and AVS.

3.7. Pyrotechnics and Separation Contacts System

The Pyrotechnics and Separation Contacts System consists of:

● contact or magnetic sensors of the relative position of the SSC mechanical units

● squibs, pyrobolts and pyrovalves.

The system is intended to execute stage separation during the SSC boost and to deploy the regular SSC systems in orbit.

4. Definition of Architecture and Block Structure of Onboard Computing System

A possible scientific task, orbital remote sensing of the Earth by a high-resolution hyper-spectrometer [1, 2, 3] (hereinafter, the instrument), was chosen to analyze the architecture requirements for the onboard computing system.

The hyper-spectral data source is assumed to be a high-resolution (1280x1024) CCD matrix with a maximum frame rate of 450 Hz. The hyper-spectral data is stored on hard disks with an ATA3 interface. An embedded PC/104+ computer, attached to the mezzanine connector of Board A, is used for system debugging and as an interface to a service computer over 10/100 Ethernet.

The electronic part of the hyper-spectral complex comprises the following modules:

- computing module;

- storage modules;

- interface module;

- power supply module.

The interconnection diagram of the complex modules is given in Figure 2.

Fig.2. Interconnection diagram of the complex modules

4.1. Electronic Boards

The guiding principle in developing the electronic modules was board versatility: one and the same board may be used for different applications. As a result, the entire complex involves only four types of boards.

Board A is the basic board of the complex. It carries a Virtex-II Pro FPGA, a TMS320C6416 DSP, a 256 MB SDRAM memory module, and connectors for mezzanine modules (PC/104+ sockets) and for the backplane (Compact PCI slot).

Board B is an auxiliary board of the complex. It has connectors for linking with Board A and for other cables.

Board C is the backplane for the Boards A of the computing module. It has interface connectors for Boards A and for power supply cables.

Board D is the key board of the optical part of the hyper-spectral system. It carries a LUPA-1300 CCD matrix, fast ADCs, buffer amplifiers, and connectors for interfacing with Board A and for other cables.

4.2. Computing Module

Analysis of the system requirements showed that conventional PCI and Compact PCI buses do not provide the necessary throughput. Moreover, these standards force the designer either to limit the number of boards or to use bridges, which reduces the bus throughput for boards communicating via a bridge. Besides, the «multidrop» architecture implemented in these buses is less suitable because of its high complexity and low scalability.

A “point-to-point” architecture was proposed to overcome these limitations. In this architecture each link connects exactly two terminal devices.

4.3. Layout of Computing Module

The Computing Module is implemented in the Compact PCI 3U format. This format was chosen for the following reasons:

- the Computing Module's housing complies with the “Euromechanics” standard, which allows it to be assembled from relatively inexpensive, widely used parts;

- the high-density connectors involved have low inductance and an acceptable impedance for use in high-speed data links;

- the large number of “ground” pins in the connectors provides a stable digital “ground” even under strong external interference;

- a rear-pin option (feedthrough connectors) allows auxiliary boards to be connected to the rear-side connectors, see Fig.3 and Fig.4.

Fig.3. Auxiliary boards connection scheme.

Complete set of computing module

Fig.4. Main and auxiliary boards connection diagram

The Computing Module is populated with Boards A and Boards B. The number of Boards A depends on the computing power needed, and the number of Boards B depends on the number of connected devices. Besides, the Computing Module includes a PC/104+ computer used for control and debugging of the system and for communication with the other systems of the complex over 10/100 Ethernet.

4.4. Storage Devices Module

The Storage Devices Module comprises rack-mounted magnetic storage devices of the “hard-disk” type. This arrangement allows the required array of hard disks to be installed. The disks are also easy to remove or replace. The rack containers are equipped with a fan and a temperature monitor to avoid overheating.

4.5. Interface Module

The Interface Module (used for debugging) consists of a touch-panel TFT display connected to the computer mounted on the control board of the computing module. The Interface Module provides runtime monitoring and can also be used for debugging and setting up the software and hardware of the complex.

4.6. Power Supply Module

The Power Supply Module converts the input voltage to the operating voltages of the complex: +5V and +12V. Other voltages consumed by the components of the complex are generated directly at the point of use. The boards of the Power Supply Module carry high-stability converters and are combined in a way that makes it possible to scale the total power capacity to the required load.

5. Operation Principles and Data Flows

First let us consider the operation principles of the hyper-spectral complex in terms of the electronic part of the optical module. The details of the computing module are discussed further on.

5.1. Optical Module of Hyper-Spectral System

The optical module of the hyper-spectral system comprises four Boards D with a Board A attached to each one.

The optical elements of the module form an image on the surface of the CCD matrix, where it is captured and transferred to Board A in response to that board's request control signals. The image is then passed to the computing module via the dual Rocket I/O line.

Each CCD matrix has a resolution of 1280×1024 with a dynamic range of 1000 (10 bits) and supports a frame rate of up to 450 fps. Therefore, one Board A receives not more than:

1280×1024 (resolution)

× 10 (dynamic range, bits)

× 450 (maximum frames per second)

= 5 898 240 000 bit/s ≈ 5.9 Gb/s.

This data stream is fed to Board A. Board A converts the data to serial form and transfers it to the computing module via the dual Rocket I/O lines. One Rocket I/O line has a throughput of 0.65 to 3.125 Gb/s, so full-scale operation does not require any data compression, which lowers the load on the computing module. Nevertheless, some light preprocessing or data compression is advisable to avoid operating at the throughput limit.
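The data-rate estimate can be reproduced with a few lines of Python. This is only a sketch: it uses decimal prefixes (1 Gb/s = 10^9 bit/s), and the text does not say whether the 3.125 Gb/s figure is a line rate or a payload rate, so the sketch evaluates both readings. The factor 8/10 is an assumption that follows from the 8B/10B coding described in Section 6.5; all names in the code are illustrative.

```python
# Back-of-the-envelope check of the sensor data rate against the link capacity.
# All figures come from the text; the 8B/10B payload factor is an assumption
# about how the 3.125 Gb/s figure should be read (line rate vs. payload rate).

WIDTH, HEIGHT = 1280, 1024      # CCD resolution, pixels
BITS_PER_PIXEL = 10             # dynamic range of 1000 -> 10 bits
MAX_FPS = 450                   # maximum frame rate

LANE_RATE_BPS = 3.125e9         # maximum rate of one Rocket I/O line
LANES = 2                       # "dual" Rocket I/O line
PAYLOAD_FACTOR_8B10B = 8 / 10   # 8 payload bits per 10 line bits


def sensor_rate_bps(fps: float = MAX_FPS) -> float:
    """Raw data rate produced by one CCD matrix, in bit/s."""
    return WIDTH * HEIGHT * BITS_PER_PIXEL * fps


def link_capacity_bps(count_coding_overhead: bool) -> float:
    """Capacity of the dual link, optionally net of 8B/10B overhead."""
    raw = LANES * LANE_RATE_BPS
    return raw * PAYLOAD_FACTOR_8B10B if count_coding_overhead else raw


if __name__ == "__main__":
    rate = sensor_rate_bps()
    for overhead in (False, True):
        cap = link_capacity_bps(overhead)
        print(f"8B/10B overhead counted: {overhead}; "
              f"sensor {rate / 1e9:.3f} Gb/s, link {cap / 1e9:.3f} Gb/s, "
              f"utilization {rate / cap:.0%}")
```

Under the first reading the dual link runs at about 94% utilization at the maximum frame rate. Under the second reading the sensor rate exceeds the payload capacity, which is consistent with the remark that some preprocessing or compression is advisable.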

5.2. Computing Module

The Computing Module consists of not more than 9 Boards A, each of which can be connected to a Board B. Besides, a PC/104+ form-factor computer or an extra mezzanine computer (not described in this preprint) can be mounted on the mezzanine connector of Board A.

The computing module's Board C (backplane), which carries 6 Rocket I/O lines as shown in Fig.5, is connected to Board A on one side. This allows communication with adjacent boards via fast serial links. On the other side, the backplane is connected to Board B, which provides Board A with an interface to external devices.

If any two Boards A fail, the module remains operable: communication between the Boards A bypasses the failed boards.

Fig.5. Boards A communication diagram

The Boards A of the Computing Module serve different functions depending on their assignment. The main functions, with descriptions of their operation and data streams, are discussed below.

The Boards A of the Computing Module form three units:

- data acquisition unit;

- data processing unit;

- control and exchange unit.

The Boards A of the data acquisition unit are connected to the optical module via Boards B and Rocket I/O lines. Their main function is to accumulate and buffer data and transfer it to the data processing unit. In addition, these boards transfer data to the storage module, generating the commands for writing data to the hard drives.

The Boards A of the data processing unit process the data with the working algorithms and pass the results to the control and exchange unit.

The Boards A of the control and exchange unit interact with the PC/104 computer, receiving commands and transferring data from the other systems of the complex.

6. Trunk Interface

The trunk interface with decentralized control used in the electronic modules system meets the following requirements:

Adopted abbreviations:

DL – data link

GCB – General Communication Bus

Aurora [4] – software and hardware interface

Rocket I/O [5] – transceiver standard

6.1. Contents of Interface Technical Facilities

The interface technical facilities include:

- processor modules;

- backplane;

- I/O modules.

There may be up to 10 processor modules, of the same or different types, a single backplane, and up to 10 I/O modules.

Processor modules are connected to the backplane and have no other external connections. I/O modules are connected to the backplane and have connectors for external devices. The number of external devices and the buses they use depend on the specific task. The diagram of the processor and I/O module connections to the backplane is given in Fig.3.

6.2. Information Exchange and Data Transfer Control Pattern

Information is exchanged according to the Aurora protocol [4]. Aurora is an easily scalable, logical, multilevel protocol designed to set up a data link between two nodes over one or several physical lines. The Aurora protocol does not depend on the chosen low-level protocol, which could be, for example, Ethernet or Rocket I/O. This isolation from the physical level frees the designer from dependence on the development of low-level protocols. The Aurora protocol is open and fully available for user extensions and changes.

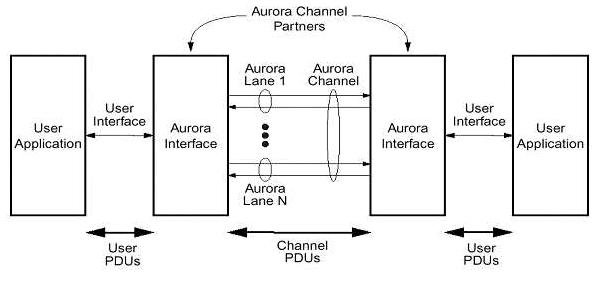

This section describes data transfer over Aurora channels. An Aurora channel may comprise several Aurora lanes. Each lane is a bidirectional channel for serial data transfer (DT). The communicating devices are called Aurora partners. Fig. 6 depicts their interaction.

Fig.6. Aurora channel overview

The Aurora interface passes data to and from user applications, so data transfer involves two types of link:

- between Aurora interfaces;

- between a user application and an Aurora interface.

Between Aurora interfaces the data stream consists of Channel Protocol Data Units (PDU); between a user application and an Aurora interface it consists of User PDUs. The units may be of any length, as defined at the higher level.

6.3. Transceiving of Data

Data Flow Management

Five types of data are passed over the Aurora channel. They are listed in order of transmission priority, from highest to lowest:

a. Clock (frequency) compensation sequences. These are sets of control symbols that compensate for differences between the clock frequencies of the node transmitters.

b. Control symbols of the interface module.

c. Control symbols of the user application.

d. Data units.

e. Control symbols generated in the standby (idle) mode.
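The priority order can be illustrated with a small arbiter. This is a minimal sketch rather than the Aurora implementation: the class and function names are hypothetical, and the only rule encoded is the one stated in the list, namely that the pending item with the highest priority is sent first and idle symbols are sent when nothing else is waiting.

```python
from enum import IntEnum
from typing import Iterable


class TxKind(IntEnum):
    """Traffic classes in priority order; lower value = higher priority."""
    CLOCK_COMPENSATION = 0   # a. clock compensation sequences
    INTERFACE_CONTROL = 1    # b. interface control symbols
    USER_CONTROL = 2         # c. user application control symbols
    DATA_UNIT = 3            # d. data units
    IDLE = 4                 # e. standby-mode control symbols


def next_to_send(pending: Iterable[TxKind]) -> TxKind:
    """Pick the highest-priority class among those with something to send.

    If nothing is pending, the channel falls back to idle symbols.
    """
    return min(pending, default=TxKind.IDLE)
```

For example, `next_to_send([TxKind.DATA_UNIT, TxKind.USER_CONTROL])` selects `TxKind.USER_CONTROL`, so a data unit waits while a user control symbol is pending.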

Transfer of user PDUs requires the following operations:

a. Padding.

b. Adding control delimiter symbols at the unit boundaries.

c. 8B/10B coding of the PDU.

d. Serialization and clock encoding.

A user PDU consists of smaller information units, octets.

Padding. If a unit has an odd number of octets, an additional octet of 0x9C is appended to it.

6.4. Interlevel Exchange

On entering the lower level, a Channel PDU is assembled: control data is added to the information data. Control symbols called “ordered sets” are added at the beginning and the end of the Channel PDU. The starting ordered set is called /SCP/ (/K28.2/K27.7/). The ending ordered set is called /ECP/ (/K29.7/K30.7/).
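Padding and framing can be sketched as follows. The symbol representation (an octet value plus a control flag) and all names are illustrative, not part of the project's code. The octet value of a control character Kx.y is taken as y·32 + x, which gives 0x9C for K28.4; treating the pad octet 0x9C as that control character is an assumption of this sketch.

```python
from typing import List, NamedTuple


class Symbol(NamedTuple):
    value: int        # octet value, 0..255
    is_control: bool  # True for a K-character, False for a data octet


def k(x: int, y: int) -> Symbol:
    """Build the control character Kx.y (octet value = y * 32 + x)."""
    return Symbol((y << 5) | x, True)


PAD = k(28, 4)                 # octet value 0x9C, the pad value given in the text
SCP = [k(28, 2), k(27, 7)]     # start of Channel PDU: /K28.2/K27.7/
ECP = [k(29, 7), k(30, 7)]     # end of Channel PDU:   /K29.7/K30.7/


def build_channel_pdu(user_pdu: bytes) -> List[Symbol]:
    """Pad a user PDU to an even length and frame it with /SCP/ and /ECP/."""
    body = [Symbol(octet, False) for octet in user_pdu]
    if len(body) % 2:          # odd number of octets -> append one pad
        body.append(PAD)
    return SCP + body + ECP
```

A three-octet user PDU therefore becomes eight symbols: two for /SCP/, three data octets, one pad, and two for /ECP/.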

6.5. 8B/10B Coding

Before data is passed to the physical level, it undergoes 8B/10B coding. This is carried out in the Physical Coding Sublayer (PCS). The coding converts all octets, except for the padding ones, into 10-bit symbols. This is necessary to improve detection reliability at the other end of the line.

After coding, the data sequence is transmitted to the line in “Non-Return-to-Zero” (NRZ) format. Owing to the 8B/10B coding, the transmitted series contains approximately equal numbers of ones and zeros.
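The balance between ones and zeros can be checked by counting bits in the 10-bit symbols. The sketch below is not an 8B/10B encoder; it only measures the balance of a few well-known code words (the two encodings of the comma character K28.5 and the balanced data character D21.5). The function names are illustrative.

```python
from typing import Iterable, List


def disparity(symbol: int) -> int:
    """Number of ones minus number of zeros in a 10-bit symbol."""
    ones = bin(symbol & 0x3FF).count("1")
    return ones - (10 - ones)


def balance_trace(symbols: Iterable[int]) -> List[int]:
    """Cumulative ones-minus-zeros balance after each transmitted symbol."""
    total, trace = 0, []
    for s in symbols:
        total += disparity(s)
        trace.append(total)
    return trace


# Two standard 8B/10B encodings of the comma character K28.5 and the
# balanced data character D21.5, written as bit strings for readability.
K28_5_NEG = int("0011111010", 2)   # sent when the running disparity is negative
K28_5_POS = int("1100000101", 2)   # sent when the running disparity is positive
D21_5 = int("1010101010", 2)       # balanced: same code for both disparities
```

Each symbol has a disparity of 0 or ±2, and the encoder alternates between the two encodings of an unbalanced symbol, so the cumulative balance stays close to zero.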

6.6. Receiving Side Decoding

The user PDU is decoded as follows:

a. Deserialization (serial-to-parallel conversion).

b. 8B/10B decoding.

c. Removal of control symbols on transition from lower levels to higher ones.

d. Removal of the padding symbols.
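Steps c and d can be sketched in Python, assuming steps a and b have already produced a list of decoded symbols. The symbol representation (octet value plus control flag) and the function name are hypothetical. The sketch also assumes that the pad arrives flagged as a control symbol, so that a data octet with the same value 0x9C is not mistaken for padding.

```python
from typing import List, Tuple

Symbol = Tuple[int, bool]           # (octet value, is_control)

SCP = [(0x5C, True), (0xFB, True)]  # /K28.2/K27.7/
ECP = [(0xFD, True), (0xFE, True)]  # /K29.7/K30.7/
PAD = (0x9C, True)                  # pad, assumed to be flagged as control


def extract_user_pdu(symbols: List[Symbol]) -> bytes:
    """Strip the framing ordered sets and the pad, returning the user PDU."""
    if symbols[:2] != SCP or symbols[-2:] != ECP:
        raise ValueError("missing /SCP/ or /ECP/ delimiter")
    body = symbols[2:-2]
    if body and body[-1] == PAD:    # at most one pad, always at the end
        body = body[:-1]
    if any(is_control for _, is_control in body):
        raise ValueError("unexpected control symbol inside PDU")
    return bytes(value for value, _ in body)
```

Raising an error on a malformed frame is a design choice of the sketch; a real receiver would more likely report the error to a higher level and resynchronize.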

7. Data Link (DL) Features

The physical level of the interface is implemented in the Rocket I/O standard [5].

7.1. Rocket I/O Properties

a) Full-duplex transceiver operating at various rates (500 Mb/s – 3.125 Gb/s). The maximum line length is 20 inches (50 cm).

b) Monolithic clock synthesis and received-clock recovery systems requiring a minimum number of external electronic components. This property is especially important for high-frequency data links, since the received frequency may deviate slightly from the reference clock frequency of the local transmitter. Special buffers are provided to compensate for this discrepancy.

c) Programmable output voltage swing on the differential lines of Rocket I/O. Five voltage levels can be set, from 800 mV up to 1600 mV. The polarity is also programmable.

d) Programmable pre-emphasis level for better electrical quality of the link. Pre-emphasis is a deliberate alteration of the transmitted signal's shape; with it, the received signal is identified more reliably than without it. Five pre-emphasis levels can be set.

e) DC isolation capability. It is needed when the receiver and transmitter cannot be DC-coupled.

f) Programmable on-chip termination of 50 or 75 Ohm with no external components.

g) Programmable serial and parallel loopback from transmitter to receiver for integrity testing.

h) Programmable detection of delimiter symbols (comma detection) and other integrity checks of the input (8B/10B) signal.

7.2. Architecture

Survey

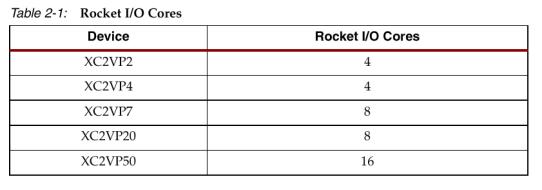

Rocket I/O transceivers are based on MindSpeed’s SkyRail technology.

Table 2-1 shows numbers of Rocket I/O transmitters in different types of FPGA

packages of Xilinx.

The system can work in the serial data transmitting mode at any baud

rate within the range of 500Mb/s – 3.125Mb/s. The data transmission rate may

not be preset as it could be clocked by received signal. Data is transferred

via one, two or four Rocket I/O channels. Different protocols are possible for

the Rocket I/O data transmission.

There are two ways to select the data transfer protocol:

a. Changing static attributes in the HDL code.

b. Changing the attributes dynamically through the protocol ports.

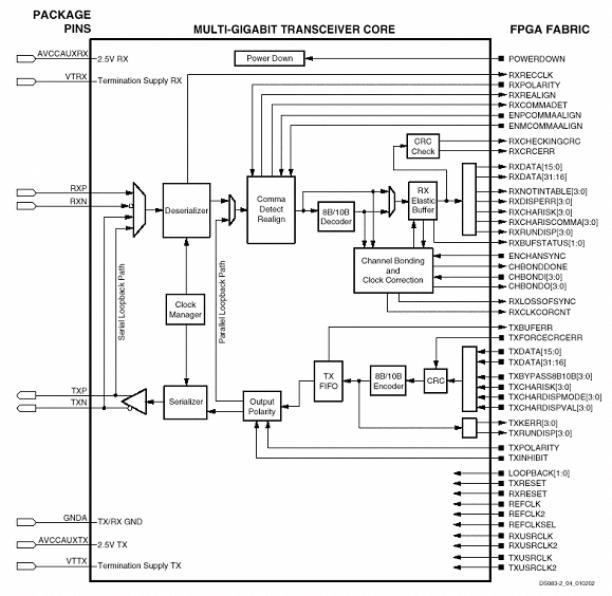

A Rocket I/O transceiver consists of two sides:

a. The side connected to the physical data link lines (on the left of Fig. 7).

b. The program code side (on the right).

Fig.7. Rocket I/O transceiver block diagram

The left side comprises the serializer/deserializer (SERDES), the TX and RX buffers, the reference clock generator, and the clock recovery circuit for the input signal.

The right side contains the 8B/10B codec and a resizable buffer used for phase shift recovery and for combining signals when 2 or 4 channels are used. The carrier frequency is 20 times the reference clock frequency at the REFCLK pin.

7.2.1. Inner Frequency Synthesizer

The carrier frequency of the input signal is recovered by the clock/data recovery (CDR) circuit. This circuit uses an on-chip phase-locked oscillator and requires no external components. The CDR circuit recovers both the frequency and the phase of the input signal. The recovered frequency is divided by 20 and output as RXRECCLK.

REFCLK and RXRECCLK are not phase-locked to each other or to any other clock unless otherwise specified. If data is received over a one- or four-byte channel, RXUSRCLK and RXUSRCLK2 have different frequencies (1:2). The leading edge of the lower-frequency clock is aligned with the trailing edge of the higher-frequency clock. The same holds for TXUSRCLK and TXUSRCLK2.

If there is no input signal, the CDR circuit automatically locks to REFCLK. Correct operation requires that the frequencies of REFCLK, TXUSRCLK, RXUSRCLK and RXRECCLK deviate from one another by no more than 100 ppm. To meet this requirement, power supply noise must be suppressed, and crosstalk between the signal lines, and between the signal lines and ground, must be eliminated.
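The 100 ppm requirement and the factor of 20 between the reference clock and the serial rate can be expressed numerically. The sketch below is illustrative only: the function names are hypothetical, and the 156.25 MHz reference clock is an assumed example value chosen because 20 × 156.25 MHz gives the maximum rate of 3.125 Gb/s quoted in the text.

```python
def ppm_offset(freq_hz: float, reference_hz: float) -> float:
    """Relative deviation of freq_hz from reference_hz, in parts per million."""
    return (freq_hz - reference_hz) / reference_hz * 1e6


def within_tolerance(freq_hz: float, reference_hz: float,
                     limit_ppm: float = 100.0) -> bool:
    """True if the two clocks differ by no more than limit_ppm."""
    return abs(ppm_offset(freq_hz, reference_hz)) <= limit_ppm


REFCLK_HZ = 156.25e6                 # hypothetical reference clock
LINE_RATE_BPS = 20 * REFCLK_HZ       # serial rate is 20 x REFCLK
```

With this reference clock, a recovered clock that is 10 kHz off deviates by 64 ppm and is acceptable, while a 20 kHz offset (128 ppm) is outside the limit.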

7.2.2. Transmitter

The FPGA may transfer 1, 2 or 4 symbols at a time. Each symbol can have 8 or 10 bits. If 8-bit symbols are used, the remaining signals are used to control the 8B/10B codec. If the codec is off, i.e. the signal bypasses it, the 10 bits of the symbol are transferred in the following sequence:

TXCHARDISPMODE[0]

TXCHARDISPVAL[0]

TXDATA[7:0]
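The composition of the 10-bit word in bypass mode can be sketched as a bit-packing function. The layout used here, with TXCHARDISPMODE[0] as the most significant bit followed by TXCHARDISPVAL[0] and TXDATA[7:0], is an assumption based on the order in which the signals are listed; the function name is hypothetical.

```python
def bypass_word(tx_char_disp_mode: int, tx_char_disp_val: int,
                tx_data: int) -> int:
    """Assemble the 10-bit word sent when the 8B/10B codec is bypassed.

    Assumed layout (most significant bit first), following the order in
    which the signals are listed in the text:
    TXCHARDISPMODE[0], TXCHARDISPVAL[0], TXDATA[7:0].
    """
    if tx_char_disp_mode not in (0, 1) or tx_char_disp_val not in (0, 1):
        raise ValueError("control inputs must be single bits")
    if not 0 <= tx_data <= 0xFF:
        raise ValueError("TXDATA must fit in 8 bits")
    return (tx_char_disp_mode << 9) | (tx_char_disp_val << 8) | tx_data
```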

The 8B/10B codec in more detail.

The codec uses 256 data symbols and 12 control symbols, as used in the Gigabit Ethernet, XAUI, Fibre Channel and InfiniBand protocols. The codec input consists of 8 data bits and one special “K” bit that marks a control symbol. The codec both checks the validity of the data transfer and maintains the DC balance of the serial line. The codec may be excluded from the data transfer path.

The transceiver also has a disparity control system. Both this system and the 8B/10B codec directly use the TXCHARDISPMODE and TXCHARDISPVAL signals.

7.2.3. Output FIFO buffer

Normal operation of the device is possible only when the FPGA reference clock (TXUSRCLK) is frequency-locked to REFCLK. A phase shift of only one cycle is permitted. The FIFO has a depth of four. An error signal is generated if the buffer overflows or underflows.
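The behavior of a depth-four buffer with an error flag can be modeled in a few lines. This is a behavioral sketch, not a description of the transceiver hardware: the class name is hypothetical, and what happens to the data on an error (dropping the word on overflow, returning a filler value on underflow) is an assumption made only so that the model is complete.

```python
from collections import deque


class TxFifo:
    """Fixed-depth FIFO that flags overflow and underflow instead of blocking."""

    def __init__(self, depth: int = 4) -> None:
        self.depth = depth
        self._items: deque = deque()
        self.error = False          # latched on overflow or underflow

    def write(self, word: int) -> None:
        if len(self._items) >= self.depth:
            self.error = True       # overflow: the word is dropped
            return
        self._items.append(word)

    def read(self) -> int:
        if not self._items:
            self.error = True       # underflow: return a filler value
            return 0
        return self._items.popleft()
```

The model shows why the write and read clocks must be frequency-locked: with any sustained frequency difference, a buffer this shallow overflows or underflows after a short time.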

7.2.4. Serializer

The transceiver converts parallel data into serial form, with the least significant bit transferred first. The carrier frequency is REFCLK multiplied by 20. The electrical polarity of TXP and TXN can be set through the TXPOLARITY port. This is necessary when the differential signals have been swapped on a board.
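Least-significant-bit-first serialization and the effect of swapped differential lines can be illustrated as follows. The function names and the `invert` parameter are hypothetical; the sketch models a swapped TXP/TXN pair simply as a complement of every bit, which is the effect a polarity setting compensates for.

```python
from typing import List


def serialize(word: int, width: int = 10, invert: bool = False) -> List[int]:
    """Return the bits of `word` in transmission order, least significant first.

    `invert` models swapped TXP/TXN lines: every bit is complemented.
    """
    bits = [(word >> i) & 1 for i in range(width)]
    return [b ^ 1 for b in bits] if invert else bits


def deserialize(bits: List[int]) -> int:
    """Rebuild the parallel word from bits received least significant first."""
    return sum(bit << i for i, bit in enumerate(bits))
```

Inverting twice restores the original bits, which is why a single polarity setting at one end is enough to correct a swap made in the board layout.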

8. Redundancy Interface

The key point in developing the hardware of the computing system is the choice of a general bus. The General Interconnect Bus (GIB) Ver.1.02, which allows redundancy, was chosen. The bus is physically implemented with Z-PACK connectors with feedthrough pins (rear-pin connection). The bus performs two functions.

a) The bus interconnects the main boards plugged into the backplane. Fig. 6 gives an example with six transceivers involved. The figure demonstrates that the failure of one board does not affect the availability of the others.

b) The bus must provide exchange between the main Board A and the auxiliary Board B, which are connected to the backplane C.

Note that if 12 Rocket I/O transceivers are used, the redundancy problem is solved directly: the regular operation mode involves 6 transceivers while the other 6 are held in reserve. Thus twofold data link redundancy is provided.

Fig.8. Diagram of interconnection between basic A boards

Note that such an interconnection preserves communication between any two boards even if two neighboring boards between them fail (Fig.8).
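The fault-tolerance claim can be checked on a model topology. The exact wiring is defined by the figure, which is not reproduced here, so the sketch assumes one plausible layout: boards sit in a row, and each board uses its six links to reach the three nearest boards on either side. All names are illustrative.

```python
from itertools import combinations
from typing import Dict, Iterable, Set


def build_links(n_boards: int, reach: int = 3) -> Dict[int, Set[int]]:
    """Link each board to its neighbours up to `reach` slots away on each side."""
    return {
        b: {o for o in range(b - reach, b + reach + 1)
            if o != b and 0 <= o < n_boards}
        for b in range(n_boards)
    }


def connected(links: Dict[int, Set[int]], failed: Iterable[int]) -> bool:
    """True if all surviving boards can still reach one another."""
    dead = set(failed)
    alive = [b for b in links if b not in dead]
    if not alive:
        return True
    seen, stack = {alive[0]}, [alive[0]]
    while stack:                      # depth-first search over healthy boards
        for nxt in links[stack.pop()] - dead - seen:
            seen.add(nxt)
            stack.append(nxt)
    return len(seen) == len(alive)


def survives_any_k_failures(n_boards: int, k: int) -> bool:
    """Check every combination of k failed boards."""
    links = build_links(n_boards)
    return all(connected(links, combo)
               for combo in combinations(range(n_boards), k))
```

Under this assumed layout, a nine-board module stays connected after any two board failures, including two adjacent ones, because a link spanning three slots bridges the gap. Three adjacent failures in the middle of the row split the module.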

Conclusions

The preprint presents the hardware results of the project to create a fail-safe BCS for controlling both the spacecraft and the scientific equipment complex. The requirements for the BCS hardware are defined. The block structure and contents of the base SSC complex are substantiated. The BCS architecture and schematic diagram are defined. The operation principle of the hyperspectral system is considered. The trunk interface of the BCS boards and the data link characteristics are described. The design principle of the BCS backplane, which allows failed boards to be bypassed, is described.

References

1. Vorontzov D.V., Orlov A.G., Kalinin A.P., Rodionov A.I., Shilov I.B., Rodionov I.D., Lyubimov V.N., Osipov A.F., Dubrovitzkiy D.Yu., Yakovlev B.A. Estimate of spectral and spatial resolution for the AGSMT-1 hyperspectrometer. Moscow: Institute for Problems of Mechanics of RAS, 2002. Preprint No. 704. 35 p. (in Russian).

2. Belov A.A., Vorontzov D.V., Dubrovitzkiy D.Yu., Kalinin A.P., Lyubimov V.N., Makridenko L.A., Ovchinnikov M.Yu., Orlov A.G., Osipov A.F., Polischuk G.M., Ponomarev A.A., Rodionov I.D., Rodionov A.I., Salikhov R.S., Senik N.A., Khrenov N.N. “Astrogon-Vulkan” small spacecraft for high resolution remote hyperspectral monitoring. Moscow: Institute for Problems of Mechanics of RAS, 2003. Preprint No. 726. 32 p. (in Russian).

3. Vorontzov D.V., Orlov A.G., Kalinin A.P., Rodionov A.I., Rodionov I.D., Shilov I.B., Lyubimov V.N., Osipov A.F. Use of Hyperspectral Measurements for Remote Earth Sensing. Moscow: Institute for Problems of Mechanics of RAS, 2002. Preprint No. 702. 35 p. (in Russian).